In reliability terms, parallel means that any one of the elements in the parallel structure is enough for the system to function. This does not necessarily mean the elements are physically in parallel: capacitors wired in parallel provide a specific behavior in the circuit, and if one capacitor fails the system might fail.

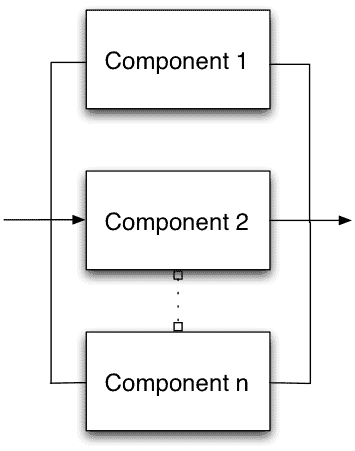

In this simple drawing, there are n components in parallel, and only one of them needs to work for the system to function.

If component #2 fails, the others will permit the system to function.

Simple, and very useful. This construction can raise the overall reliability above that of the individual components. Unlike a series system, where the weakest component limits the reliability, adding redundancy here improves the system reliability.

Consider a two-component parallel system. If both components are working, then the system is working. If either component 1 or component 2 fails, the system is still working. The system fails if and only if both components fail. Unlike a series system, where any one failure causes a system failure, in this simple example two failure events have to occur before the system fails.
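The four states described above can be enumerated directly. The following is a minimal sketch, assuming the two components fail independently; the function name and the reliabilities 0.9 and 0.8 are illustrative and do not come from the article.

```python
from itertools import product

def two_component_parallel(r1, r2):
    """Sum the probabilities of every state in which the system works."""
    total = 0.0
    # Each component is either working (True) or failed (False): four states.
    for c1_works, c2_works in product([True, False], repeat=2):
        p_state = (r1 if c1_works else 1 - r1) * (r2 if c2_works else 1 - r2)
        # The system works unless both components have failed.
        if c1_works or c2_works:
            total += p_state
    return total

r_sys = two_component_parallel(0.9, 0.8)  # illustrative reliabilities
print(round(r_sys, 6))
```

Only the state where both components fail is excluded from the sum, so the result equals one minus the probability of that single state.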

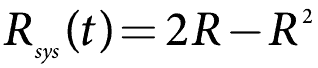

What is the chance of having two failures? The formula is based on the probability of component 1 or component 2 operating. Without doing the derivation, and assuming the components fail independently, we can write the reliability of the two-component parallel system as:

$$R_{sys}(t) = R_1(t) + R_2(t) - R_1(t)\,R_2(t)$$

This gets very complicated quickly with more than three components in parallel. Before exploring another way to calculate parallel systems, there is a special case to mention first.
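To see how quickly the direct approach grows, the "at least one component operating" probability can be expanded by inclusion-exclusion, with one term per non-empty subset of components. This is a sketch under the assumption of independent components; the function name and the value 0.9 are illustrative.

```python
from itertools import combinations
from math import prod

def parallel_by_inclusion_exclusion(reliabilities):
    """Expand P(at least one component works) term by term.

    Returns the system reliability and the number of terms summed.
    """
    n = len(reliabilities)
    total = 0.0
    terms = 0
    for k in range(1, n + 1):
        sign = 1 if k % 2 == 1 else -1  # add odd-sized subsets, subtract even
        for subset in combinations(reliabilities, k):
            total += sign * prod(subset)
            terms += 1
    return total, terms

for n in (2, 3, 5, 10):
    value, terms = parallel_by_inclusion_exclusion([0.9] * n)
    print(n, terms, round(value, 10))
```

The number of terms is 2^n - 1, so ten components already need 1023 terms, which is why working with unreliability is usually preferred.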

When the components in parallel are the same (reliability-wise), so that R_1(t) = R_2(t) = R(t), the two-component formula simplifies to

$$R_{sys}(t) = 2R(t) - R(t)^2$$

which follows directly by substitution.

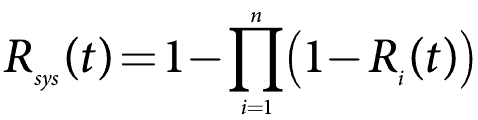

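Often it's easier to do parallel system calculations using the unreliability, 1 - R(t), which is also the CDF, F(t). The math is then much like a series system, with one correction: multiply the component unreliabilities to get the system unreliability, then subtract the result from 1. For the general case of n independent components, the system reliability formula for a parallel system becomes

$$R_{sys}(t) = 1 - \prod_{i=1}^{n} \left[1 - R_i(t)\right]$$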
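The unreliability approach is short to write down in code. The sketch below assumes independent components and uses illustrative reliabilities; the function names are hypothetical. A series calculation is included only to show the parallel to it: a series system multiplies reliabilities, while a parallel system multiplies unreliabilities and subtracts from 1.

```python
from math import prod

def series_reliability(reliabilities):
    """Every component must work: multiply the reliabilities."""
    return prod(reliabilities)

def parallel_reliability(reliabilities):
    """At least one component must work: multiply the unreliabilities,
    then subtract the system unreliability from 1."""
    system_unreliability = prod(1 - r for r in reliabilities)
    return 1 - system_unreliability

mixed = [0.70, 0.85, 0.90]  # illustrative values, not from the article

print(f"series:   {series_reliability(mixed):.4f}")
print(f"parallel: {parallel_reliability(mixed):.4f}")
```

With these values the series result falls below the weakest component, and the parallel result rises above the strongest one.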

So, let's say we have five components in parallel, each with a reliability at one year of use, R(1), of 90%, or 0.9. Calculate the system reliability.

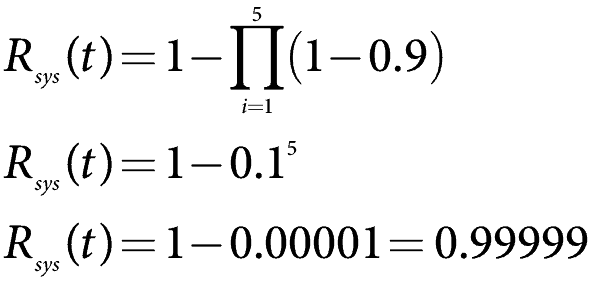

Using the above formula and setting the reliability of each element at 0.9, we find

$$R_{sys}(1) = 1 - (1 - 0.9)^5 = 1 - 0.00001 = 0.99999$$

which is very reliable.
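To see how the result builds up, the same calculation can be repeated for one through five identical components. This is a small sketch assuming independent components, each with the 0.9 reliability from the example; the function name is illustrative.

```python
def identical_parallel_reliability(r, n):
    """Reliability of n identical, independent components in parallel."""
    return 1 - (1 - r) ** n

for n in range(1, 6):
    print(n, f"{identical_parallel_reliability(0.9, n):.5f}")
```

Each added component contributes one more "nine": 0.9, 0.99, 0.999, 0.9999, and finally 0.99999 for five components.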

It’s expensive to add redundant parts to a system, yet in some cases, it is the right solution to create a system that meets the reliability requirements. Of course, actual systems have many variations and complications over simply setting components up in parallel. Load sharing, hot, warm, or cold standby, switching or voting systems, and many others can complicate the construction of a parallel system.

Redundancy, of which the simple parallel arrangement is the most basic form, is how a system's reliability can be raised above the limits of individual component reliability.

Related:

Reliability Block Diagrams Overview and Value (article)

Reliability Apportionment (article)

k out of n (article)

Dear sir,

I want to calculate the total breakdown time and the number of breakdowns between points A and B.

There are 3 machines connected in parallel.

Hi Fred,

First of all, I would like to thank you very much for sharing your knowledge.

I have a question regarding the failure definition for n elements in a parallel system. Since a failure can be either an open circuit or a short circuit, an element failure in a parallel scheme will have a different impact depending on the mode: if one element fails short while all the others are sound, the system is shorted and so is considered failed, whereas an open failure of one element will not affect the overall system reliability. Can you clarify this point?

A complementary second question: what if we are studying parametric drift of n parallel elements, with a given failure criterion characterized by a Weibull law for each element (either the same law or different Weibull parameters for each one)? Do you know of a reference or book addressing this question for series or parallel system reliability?

Thank you for your advice. Alain.

Hi Alain,

Yep, if the items are in parallel and one has a short that bypasses the parallel structure, then it's a single point of failure that causes a system failure. It would take a bit more work to model this situation, yet it is possible to estimate the system availability if one could define the probability of shorting…
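One possible way to sketch such a model, offered as an assumption rather than a worked-out method from this article: treat each element as being in one of three states (working, failed open, failed short), assume the elements are independent, and assume a single short fails the whole system. The system then works when no element is shorted and at least one element is still working. The function name and the probabilities below are hypothetical.

```python
from math import prod

def parallel_with_shorts(p_open, p_short):
    """Reliability of a parallel structure where a short is a single point failure.

    p_open[i]  : probability that element i has failed open
    p_short[i] : probability that element i has failed short
    Assumes independent elements and mutually exclusive failure modes.
    """
    no_shorts = prod(1 - s for s in p_short)  # no element has shorted
    all_open = prod(p_open)                   # every element has failed open
    # P(no shorts and at least one working) = P(no shorts) - P(all open)
    return no_shorts - all_open

# Three identical elements, each with a 10% chance of failure:
# 8% open and 2% short (illustrative split).
r_with_shorts = parallel_with_shorts([0.08] * 3, [0.02] * 3)
r_open_only = 1 - 0.10 ** 3  # simple parallel model that ignores shorts

print(f"with shorts:    {r_with_shorts:.5f}")
print(f"ignoring shorts: {r_open_only:.5f}")
```

Under these assumptions, even a small probability of shorting dominates the result, and adding more elements in parallel increases the exposure to shorts.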

And I do not know of a good reference for the drift question. It seems there may be a good technical paper on that topic.

Cheers,

Fred